Implementation Research: Make It a New Year's Resolution

In the last couple of posts, I have written about implementation evaluation. As discussed in the online BMJ article, Implementation research: what it is and how to do it, the objectives of implementation research differ depending on what one needs to learn more about.

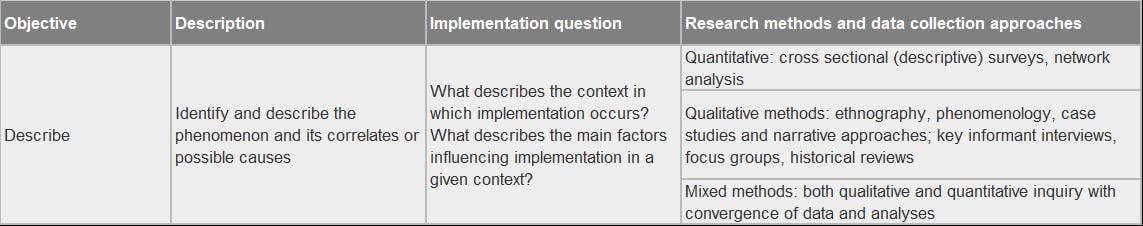

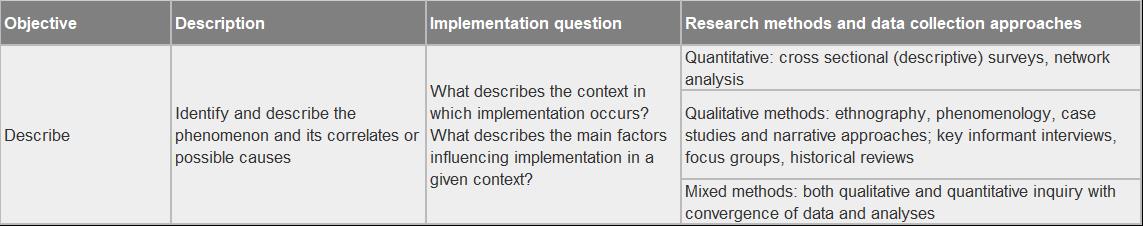

The ‘Describe’ implementation research objective seeks to gain a more robust understanding of the context surrounding program implementation (see the partial screen capture of Table 2 from the article). For energy conservation programs, context can include things like baseline conditions and equipment saturation, product availability, customers' likelihood of adopting the actions the program promotes, market conditions, or trade ally network infrastructure.

Considering the opportunities to collect data and information during program delivery, doesn’t it make sense to embed research right into implementation activities? Let’s consider an upstream buy-down program. Program MOUs require participating retailers to provide sales data for qualifying equipment in order to receive program incentive payments. How many programs also request sales data on non-qualifying equipment (with an incentive to the retailer) to assess the market share of program-qualified equipment? Analysis of this data can offer powerful information for assessing market transformation. This data, coupled with embedded retailer and end-use customer surveys over the course of delivery, can provide rich context for assessing the impacts of upstream programs.
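To make the market share idea concrete, here is a minimal sketch in Python. It assumes a retailer reports unit sales for both qualifying and non-qualifying equipment by reporting period. The record layout, the function name `qualified_market_share`, and all of the numbers are hypothetical and invented purely for illustration. They do not come from any actual program.

```python
from collections import defaultdict

# Hypothetical retailer sales records: (period, is_qualifying, units_sold).
# All figures are invented for illustration only.
sales = [
    ("Q1", True, 120),
    ("Q1", False, 480),
    ("Q2", True, 200),
    ("Q2", False, 300),
]


def qualified_market_share(records):
    """Return {period: qualifying units / total units} for each period."""
    qualifying = defaultdict(int)
    total = defaultdict(int)
    for period, is_qualifying, units in records:
        total[period] += units
        if is_qualifying:
            qualifying[period] += units
    # Skip periods with no reported sales to avoid dividing by zero.
    return {p: qualifying[p] / total[p] for p in total if total[p] > 0}


shares = qualified_market_share(sales)
for period in sorted(shares):
    print(f"{period}: {shares[period]:.0%} of units sold were program-qualified")
```

Tracking this share period over period, alongside the survey results, is one simple way to see whether qualifying equipment is gaining ground in participating stores. Without the non-qualifying sales data, the denominator is missing and the share cannot be computed at all.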

This is just one simple example. There are many other ways to get meaningful data faster without breaking the bank.

In the Next Issue

The other implementation research objectives discussed in the BMJ article are: influence (with adequacy, with plausibility, with probability), explain, and predict. In the next few issues, I will discuss each of these objectives and how it can support a more insightful research and evaluation effort for energy conservation programs.

About This Blog

We are on the brink of an evaluation renaissance. Smart grids, smart meters, smart buildings, and smart data are prominent themes in the industry lexicon. Smarter evaluation and research must follow. To explore this evaluation renaissance, I am looking both inside and outside the evaluation community in a search for fresh ideas, new methods, and novel twists on old methods. I am looking to others for their thoughts and experiences on advancing the practice of evaluation and research.

So, please…stay tuned, engage, and always, always question. Let’s get smarter together.