Implementation Research: Explain and Predict

In the last couple of posts, I have written about implementation research as discussed in the online article posted on BMJ, Implementation research: what it is and how to do it. “Implementation research aims to cover a wide set of research questions, implementation outcome variables, factors affecting implementation, and implementation strategies.”[1]

To date, I’ve introduced the ‘explore’, ‘describe’, and ‘influence’ objectives. During the ‘explore’ phase, researchers develop a hypothesis about the outcome of a program intervention. The ‘describe’ objective builds upon the hypothesis, seeking to understand the context in which implementation occurs. The ‘influence’ objective tests the hypothesis – the program theory.

Once a program’s influence has been demonstrated, explaining why and how the influence occurs supports the ability to predict what will happen in future iterations of implementation. I love this! I used to do ‘explaining and predicting’ when working for a utility. Well, the economist I hired also did this.

This brilliant economist built a predictive model to assess the influence of various programs, including DSM programs, on our key accounts’ satisfaction with the utility. With this model in place, combined with action plans linked to the drivers of satisfaction, our ranking in the third-party[2] key account satisfaction survey rose from the bottom of the second quartile to the top decile in 18 months. This implementation research, embedded within our delivery of key account programs and coupled with key account managers’ SMART[3] goals, drove the improvement.

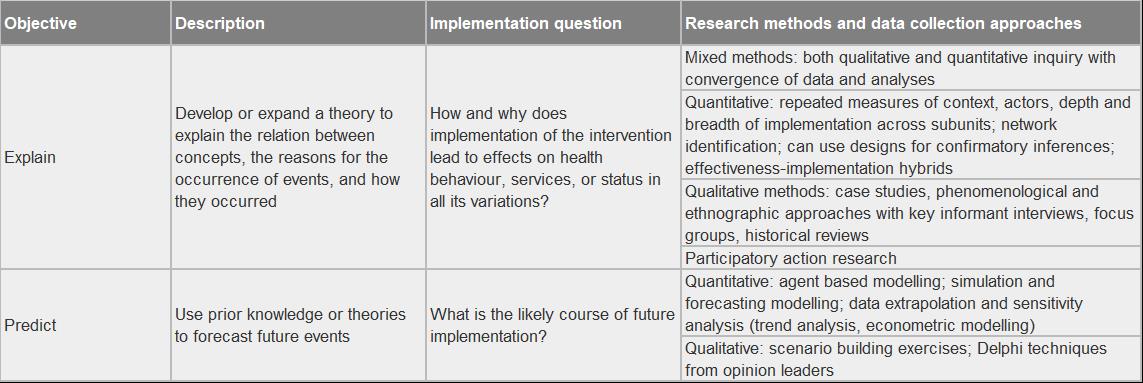

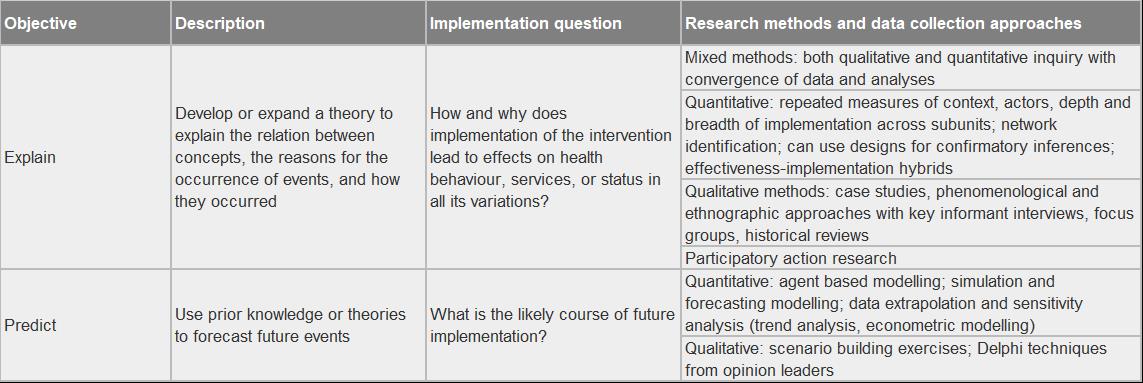

The table below summarizes the explain and predict objectives for implementation research.[4]

In the Next Issue

In the next issue, I will tackle the term ‘actionable intelligence’...unless a more pressing topic pops up.

[1] Peters, D. H., Adam, T., Alonge, O., Agyepong, I.A., & Tran, N. (2013). Implementation research: what it is and how to do it. BMJ 2013;347:f6753. https://doi.org/10.1136/bmj.f6753

[2] https://www.linkedin.com/company/tqs-research/about/

[3] Although there are various designations for the letters in the SMART acronym, we used Specific, Measurable, Actionable, Realistic, and Timely.

[4] Peters, D. H., Adam, T., Alonge, O., Agyepong, I.A., & Tran, N. (2013). Implementation research: what it is and how to do it. BMJ 2013;347:f6753. https://doi.org/10.1136/bmj.f6753, Table 2.

About This Blog

We are on the brink of an evaluation renaissance. Smart grids, smart meters, smart buildings, and smart data are prominent themes in the industry lexicon. Smarter evaluation and research must follow. To explore this evaluation renaissance, I am looking both inside and outside the evaluation community in a search for fresh ideas, new methods, and novel twists on old methods. I am looking to others for their thoughts and experiences for advancing the evaluation and research practice.

So, please…stay tuned, engage, and always, always question. Let’s get smarter together.